Yesterday, the second pentest I did for the Open Technology Fund was published. That means I can finally share some results of my exploration at the intersection of AI and security, namely using AI for pentesting!

Background

First, a brief background. During 2024 I conducted two pentests (manually, no AI) of open source applications via the Open Technology Fund. The first one was Uwazi (report available), a security-critical system for managing eyewitness videos, testimonies, and other human rights documentation for human rights defenders, journalists, activists, and researchers. Uwazi had been pentested three times previously.

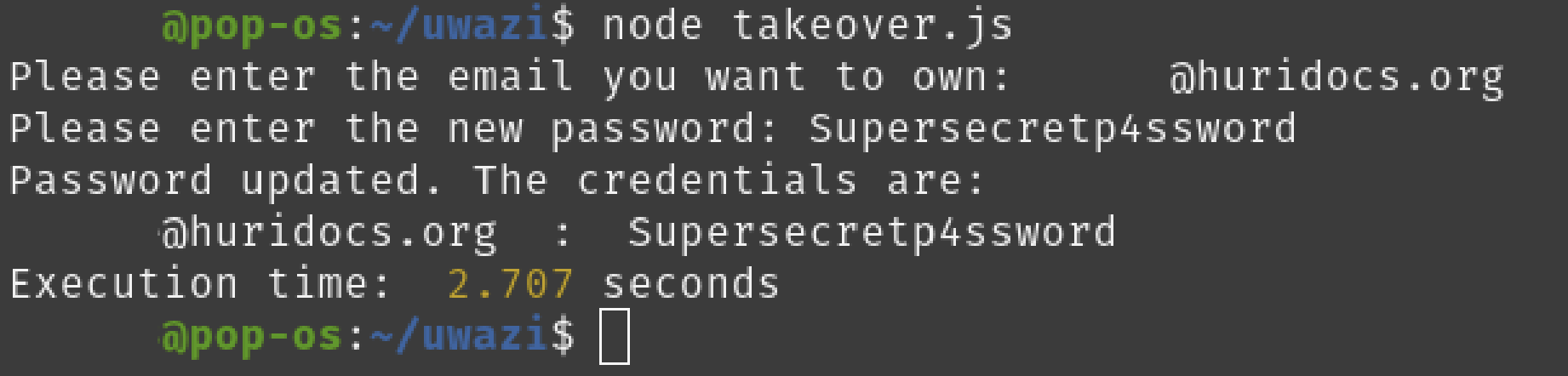

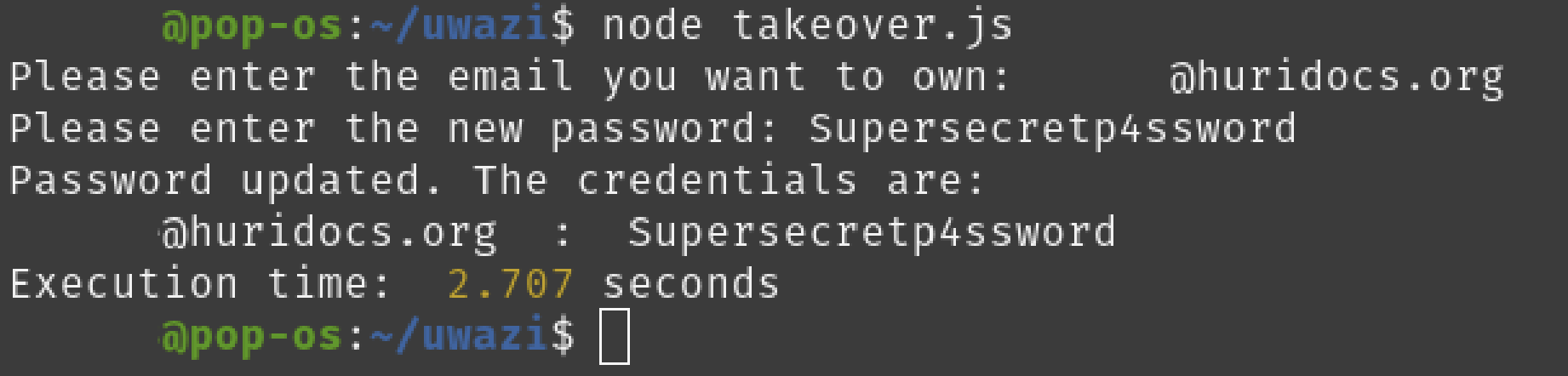

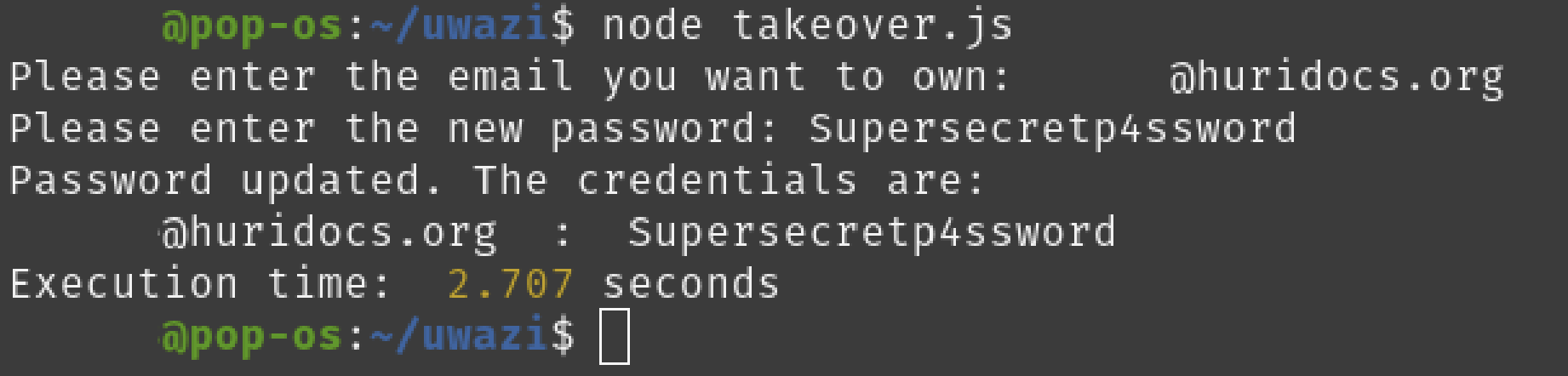

One of the discovered vulnerabilities was a zero-click account takeover via a password reset flaw. The fundamental issue was that the reset token was generated by hashing the email and the current Unix timestamp. The attacker therefore only had to try resetting the password with every possible timestamp between the moment they sent the password reset request and the moment they received the server's response. In practice this means a few hundred attempts, which translates to a few seconds. Once the right token value was tried, the attacker could set the account's password to whatever they wanted.

The account takeover via password reset

Always use a secure random function to generate password reset tokens!
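
To make the flaw concrete, here is a minimal sketch of the idea, not Uwazi's actual code. The hash function (SHA-256), the way the email and timestamp are joined, the millisecond resolution, and all function names are assumptions chosen for illustration. It shows how small the search space becomes when the only unknown input is the time, and what a token from a cryptographically secure generator looks like instead.

```python
import hashlib
import secrets


def weak_reset_token(email: str, timestamp_ms: int) -> str:
    """Hypothetical stand-in for the flawed scheme: hash(email + timestamp)."""
    return hashlib.sha256(f"{email}{timestamp_ms}".encode()).hexdigest()


def candidate_tokens(email: str, sent_ms: int, received_ms: int) -> list[str]:
    """Every token the server could have issued between request and response."""
    return [weak_reset_token(email, t) for t in range(sent_ms, received_ms + 1)]


def secure_reset_token() -> str:
    """32 bytes from the OS CSPRNG, independent of the email and the clock."""
    return secrets.token_urlsafe(32)


# Simulated attack window: the response arrives 300 ms after the request,
# and the server happened to generate the token 137 ms into that window.
email = "victim@example.org"
sent_ms = 1_700_000_000_000
received_ms = sent_ms + 300
server_token = weak_reset_token(email, sent_ms + 137)

guesses = candidate_tokens(email, sent_ms, received_ms)
print(len(guesses))             # 301 candidates to try
print(server_token in guesses)  # True: the real token is among them
```

With a token from `secrets.token_urlsafe(32)` there is nothing for the attacker to enumerate: the value does not depend on the email or on when the request was made.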

The second system we pentested was CDR-Link (report available), a secure, open source help desk application for organizations that run digital security help desks for communities facing authoritarian censorship and surveillance. The help desks run via Signal, Telegram, and WhatsApp channels, and the application gives organizations a dashboard from which they can streamline responses to support requests. I will dig into the most critical vulnerability we found, a zero-click account takeover via SSRF, later in the post.

Disclosure: The vulnerability that I missed

Part of my exploration into using AI for security has focused on vulnerability research, or pentesting. Specifically, I have been interested in where the limit lies for frontier AI models when it comes to discovering vulnerabilities in code. To really dig into it, I decided to scan open source applications that I have pentested (specifically the commit I pentested), since I know the code and which vulnerabilities it holds. One of the systems was Uwazi.

I scanned the system using a pentesting harness I built, and it found most of the vulnerabilities from the pentest. I must say, I was surprised and really impressed! I looked specifically at the findings related to the password reset functionality, and it had found the same critical issue with the predictable reset token. On top of that, it had discovered one more critical issue.

Looking back at the code,

Always use a secure random function to generate password reset tokens!

The vulnerability that AI missed

Conclusion

Thanks

- Uwazi

- Assured